Responsible use of automated decision systems in the federal government

By: Benoit Deshaies, Treasury Board of Canada Secretariat; Dawn Hall, Treasury Board of Canada Secretariat

Automated decision systems are computer systems that automate part or all of an administrative decision-making process. These technologies have foundations in statistics and computer science, and can include techniques such as predictive analysis and machine learning.

The Treasury Board Directive on Automated Decision-Making ("the Directive") is a mandatory policy instrument which applies to most federal government institutions, with the notable exception of the Canada Revenue Agency (CRA). It does not apply to other levels of government such as provincial or municipal governments. The Directive supports the Treasury Board Policy on Service and Digital and sets out requirements that must be met by federal institutions to ensure the responsible and ethical use of automated decision systems, including those using artificial intelligence (AI).

Data scientists play an important role in assessing data quality and building models to support automated decision systems. An understanding of when the Directive applies and how to meet its requirements can support the ethical and responsible use of these systems. In particular, the explanation requirement and the guidance on model selection from the Treasury Board of Canada Secretariat (TBS) (Guideline on Service and Digital, section 4.5.3.) are of high relevance to data scientists.

Potential issues with automated decisions

The use of automated decision systems can have benefits and risks for federal institutions. Bias and lack of explainability are two areas where issues can arise.

Bias

In recent years, data scientists have become increasingly aware of the "bias" of certain automated decision systems, which can result in discrimination. Data-driven analytics and machine learning can accurately capture both the desirable and undesirable outcomes of the past, and project them forward. Algorithms based on historic data can in some cases amplify race, class, gender and other inequalities of the past. As well, algorithms trained on datasets in which some groups are missing or disproportionately represented can be less accurate for those groups. For example, many facial recognition systems do not work equally well across skin colours and genders (Footnote 1, Footnote 2). Another common example is a model to support recruitment developed by Amazon which disproportionately favored male applicants. The underlying issue was identified to be that the model had been trained using the résumés of previous tech applicants to Amazon, who were predominantly men (Footnote 3, Footnote 4).

Lack of explainability

Another potential issue with automated systems arises when one cannot explain how the system arrived at its predictions or classifications. In particular, it can become difficult to produce an easily understood explanation when the systems grow in complexity, such as when neural networks are used (Footnote 5). In the context of the federal government, being able to explain how administrative decisions are made is critical. Individuals denied services or benefits have a right to a reasonable and understandable explanation from the government, which goes beyond indicating that it was a decision made by a computer. A strong illustration of this problem was seen when an algorithm started reducing the amount of medical care received by patients, leading to consequences that affected people's health and well-being. In this case, users of the system could not explain why this reduction occurred (Footnote 6).

Objectives of the Directive

The issues described above are mitigated in conventional ("human") decision-making by laws. The Canadian Charter of Rights and Freedoms defines equality rights and precludes discrimination. Core administrative law principles of transparency, accountability, legality and procedural fairness define how decisions need to be made and what explanations must be provided to those impacted. The Directive interprets these principles and protections in the context of digital solutions making or recommending decisions.

The Directive also aims to ensure that automated decision systems are deployed in a manner that reduces risks to Canadians and federal institutions, and leads to more efficient, accurate, consistent and interpretable decisions. It does so by requiring an assessment of the impacts of algorithms, quality assurance measures for the data and the algorithm, and proactive disclosures about how and where algorithms are being used, to support transparency.

Scope of the Directive

The Directive applies to automated decision systems used for decisions that impact the legal rights, privileges or interests of individuals or businesses outside of the government—for example, the eligibility to receive benefits, or who will be the subject of an audit. The Directive came into force on April 1, 2019, and applies to systems procured or developed after April 1, 2020. Existing systems are not required to comply, unless an automated decision is added after this date.

Awareness of the scope and applicability of the Directive can enable data scientists and their supervisors to support their organization in implementing the requirements of the Directive to enable the ethical and responsible use of these systems.

For example, it is important to note that the Directive applies to the use of any technology, not only artificial intelligence or machine learning. This includes digital systems making or recommending decisions, regardless of the technology used. Systems automating calculations or implementing criteria that do not require or replace judgement could be excluded, if what they are automating is completely defined in laws or regulations, such as limiting the eligibility of a program to those 18 years of age or older. However, seemingly simple systems could be in scope if they are designed to replace or automate judgement. For example, a system which supports the detection of potential fraud by selecting targets for inspections using simple indicators, such as a person making deposits in three or more different financial institutions in a given week (a judgement of "suspicious behavior"), could be in scope.
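The contrast between the two examples above can be made concrete with a minimal sketch. The function names, parameters and sample data are hypothetical and are not taken from the Directive. The first function applies a criterion that is fully defined in a rule, while the second encodes a judgement about what counts as suspicious, which is why a system built around it could be in scope despite its simplicity.

```python
from datetime import date, timedelta


def meets_age_criterion(birth_date, on_date, minimum_age=18):
    """Apply a criterion fully defined by a rule; no judgement is replaced."""
    years = on_date.year - birth_date.year
    # Subtract one year if the birthday has not yet occurred in on_date's year.
    if (on_date.month, on_date.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years >= minimum_age


def flag_for_inspection(deposits, week_start, threshold=3):
    """Flag a person whose deposits in the 7 days starting at week_start
    span `threshold` or more distinct institutions.

    deposits: iterable of (deposit_date, institution_name) pairs.
    The threshold is a hypothetical indicator of "suspicious behavior".
    """
    week_end = week_start + timedelta(days=7)
    institutions = {
        name for deposit_date, name in deposits
        if week_start <= deposit_date < week_end
    }
    return len(institutions) >= threshold


# Hypothetical sample data for illustration only.
sample_deposits = [
    (date(2021, 3, 1), "Bank A"),
    (date(2021, 3, 2), "Bank B"),
    (date(2021, 3, 4), "Bank C"),
    (date(2021, 3, 12), "Bank D"),  # falls outside the week
]
flagged = flag_for_inspection(sample_deposits, date(2021, 3, 1))
eligible = meets_age_criterion(date(2003, 6, 15), date(2021, 6, 15))
```

Both functions are a few lines long, so code size says little about scope. What matters is whether the logic substitutes for a judgement that a person would otherwise make.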

The Directive applies to systems that make, or assist in making, recommendations or decisions. Having a person make the final decision does not remove the need to comply with the Directive. For example, systems that provide information to officers who make the final decisions could be in scope. There are multiple ways in which algorithms can make, or assist in making, recommendations or decisions. The list below illustrates some of these, reflecting how automating aspects of the fact finding or analysis process may influence subsequent decisions.

Some of the ways in which algorithms can support and influence decision-making processes:

- present relevant information to the decision-maker

- alert the decision-maker of unusual conditions

- present information from other sources ("data matching")

- provide assessments, for example by generating scores, predictions or classifications

- recommend one or multiple options to the decision-maker

- make partial or intermediate decisions as part of a decision-making process

- make the final decision.

Requirements of the Directive

The following requirements of the Directive are foundational in enabling the ethical and responsible use of automated decision systems. Each section includes a brief description of the requirement and relevant examples that can enable their implementation.

Algorithmic Impact Assessment

It is important to understand and measure the impact of using automated decision systems. The Algorithmic Impact Assessment Tool (AIA) is designed to help federal institutions better understand and manage the risks associated with automated decision systems. The Directive requires that an AIA be completed before production and again when system functionality changes.

The AIA provides the impact level for a system based on the responses federal institutions provide to a number of risk and mitigation questions, many of which are of high relevance to data scientists and their supervisors. This includes questions on potential risks related to the algorithm, the decision, and the source and type of data, as well as mitigation efforts such as consultation and the identification of processes and procedures in place to assess data quality.

The output of the AIA assigns an impact level ranging from Level I (little impact) to Level IV (very high impact). For example, a simple system deciding on the eligibility of receiving a $2 rebate for purchasing an energy-efficient light bulb might be Level I, whereas a complex neural network incorporating multiple data sources deciding to grant a prisoner parole would be a Level IV. The impact assessment is multi-faceted and was established through consultations with academia, civil society and other public institutions.

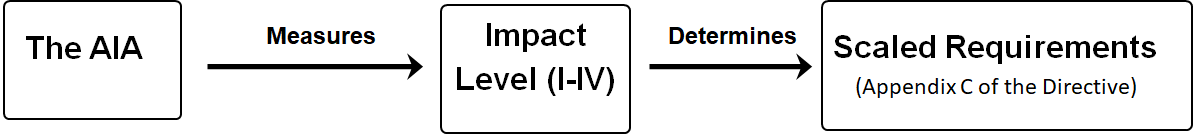

The impact level determined by the AIA supports the Directive in matching the appropriate requirements with the type of application being designed. While some of the Directive requirements apply to all systems, others vary according to the impact level. This ensures that the requirements are proportional to the potential impact of the system. For instance, at impact Level I decisions can be fully automated, whereas at Level IV the final decision must be made by a person. This supports the Directive requirements on "Ensuring human intervention" for more impactful decisions.

Description - Figure 1

The impact level calculated by the AIA determines the scaled requirements of the Directive.

Finally, the Directive requires publication of the final results of the AIA on the open government portal, an important transparency measure. This serves as a registry of automated decision systems in use in government, informs the public of when algorithms are used, and provides basic details about their design and the mitigation measures that were taken to reduce negative outcomes.
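As a hedged illustration of how requirements scale with impact level, the sketch below encodes only the two cases this article states: Level I decisions can be fully automated, and Level IV decisions must be made by a person. The names are hypothetical, and Levels II and III are deliberately left unmapped because the article does not describe them; the Directive itself is the authority for those levels.

```python
from enum import IntEnum


class ImpactLevel(IntEnum):
    I = 1
    II = 2
    III = 3
    IV = 4


# Only the levels described in this article are mapped.
# Levels II and III are left out on purpose: consult the Directive.
FINAL_DECISION_BY_PERSON = {
    ImpactLevel.I: False,   # may be fully automated
    ImpactLevel.IV: True,   # final decision must be made by a person
}


def final_decision_requires_person(level):
    """Return True or False where the article states the rule, else None."""
    return FINAL_DECISION_BY_PERSON.get(ImpactLevel(level))


def may_issue_decision(level, reviewed_by_person):
    """Gate a decision on the human-involvement rule for its impact level."""
    required = final_decision_requires_person(level)
    if required is None:
        raise LookupError("Requirement not encoded; consult the Directive.")
    return reviewed_by_person or not required
```

For example, `may_issue_decision(ImpactLevel.IV, reviewed_by_person=False)` returns `False`, so a Level IV decision would be held until a person has made it.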

Transparency

The Directive includes a number of requirements aimed at ensuring that the use of automated decision systems by federal institutions is transparent. As mentioned above, publication of the AIA on the open government portal serves as a transparency measure. As clients will rarely consult this portal before accessing services, the Directive also requires that a notice of automation be provided to clients through all service delivery channels in use (Internet, in person, mail or telephone).

Another requirement which supports transparency, and is of particular relevance to data scientists, is the requirement to provide "a meaningful explanation to affected individuals of how and why the decision was made". As discussed earlier in this article, certain complex algorithms are harder to explain, which makes this requirement harder to meet. In its guidance, TBS says to favor "easily interpretable models" and "the simplest model that will provide the performance, accuracy, interpretability and lack of bias required", distinguishing between interpretability and explainability (Guideline on Service and Digital, section 4.5.3.). This guidance is aligned with the work of others on the importance of interpretable models, such as Rudin (Footnote 7) and Molnar (Footnote 8).
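One possible way to act on the "simplest model" guidance is sketched below. This is an illustration under stated assumptions, not a procedure prescribed by TBS: the complexity ranking, the metric names, the thresholds and the candidate figures are all hypothetical, and a real project would set them from its own requirements.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    complexity_rank: int  # hypothetical ordering: lower is easier to interpret
    accuracy: float       # measured on held-out data
    bias_gap: float       # e.g. largest accuracy difference between groups


def simplest_adequate(candidates, min_accuracy, max_bias_gap):
    """Return the least complex candidate meeting both requirements, or None."""
    adequate = [
        c for c in candidates
        if c.accuracy >= min_accuracy and c.bias_gap <= max_bias_gap
    ]
    if not adequate:
        return None
    return min(adequate, key=lambda c: c.complexity_rank)


# Hypothetical evaluation results for illustration only.
candidates = [
    Candidate("decision rules", 1, accuracy=0.81, bias_gap=0.02),
    Candidate("logistic regression", 2, accuracy=0.86, bias_gap=0.03),
    Candidate("gradient boosting", 3, accuracy=0.88, bias_gap=0.03),
    Candidate("neural network", 4, accuracy=0.89, bias_gap=0.06),
]
chosen = simplest_adequate(candidates, min_accuracy=0.85, max_bias_gap=0.05)
```

With these made-up numbers the logistic regression is selected: it is the least complex candidate that satisfies both thresholds, even though more complex candidates score slightly higher on accuracy.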

Likewise, when the source code is owned by the Government of Canada, it needs to be released as open source, where possible. In the case of proprietary systems, the Directive requires that all versions of the software are safeguarded to retain the right to access and test the software and to authorize external parties to review and audit these components as necessary.

Beyond the publication of source code, additional transparency measures support the communication of the use of automated decision systems to a broad audience. Specifically, at impact levels III and IV, the Directive requires the publication of a plain language description of how the system works, how it supports the decision and the results of any reviews or audits. The latter could include the results of Gender-based Analysis Plus, Privacy Impact Assessments, and peer reviews, among others.

Quality assurance

Quality assurance plays a critical role in the development and engineering of any system. The Directive includes a requirement for testing before production, which is a standard quality assurance measure. However, given the unique nature of automated decision systems, the Directive also requires development of processes to test data for unintended biases that may unfairly impact the outcomes, and ensure that the data are relevant, accurate and up-to-date.
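A minimal sketch of one such data test follows, assuming historical records that carry a group attribute and a binary outcome. The function names and the sample data are hypothetical, and the Directive does not prescribe this particular metric. The idea is simply to compare how often each group received a positive outcome before those records are used to train a model.

```python
from collections import Counter


def positive_rate_by_group(rows):
    """rows: iterable of (group, outcome) pairs with outcome 0 or 1."""
    totals, positives = Counter(), Counter()
    for group, outcome in rows:
        totals[group] += 1
        positives[group] += outcome
    return {group: positives[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Hypothetical historical outcomes for illustration only.
history = (
    [("X", 1)] * 8 + [("X", 0)] * 2 +
    [("Y", 1)] * 4 + [("Y", 0)] * 6
)
rates = positive_rate_by_group(history)
ratio = rate_ratio(rates)
```

A low ratio does not by itself prove unfair treatment, but it signals that the data deserve a closer look before a model learns from them.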

Quality assurance efforts should continue after the system is deployed. Operating the system needs to include processes to monitor the outcomes on a scheduled basis to safeguard against unintentional outcomes. The frequency of those verifications could depend on a number of factors, such as the impact and volume of decisions, and the design of the system. Learning systems that are frequently retrained may require more intense monitoring.
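Scheduled outcome monitoring could look like the sketch below, which compares the share of positive decisions in each period against a baseline. The baseline, the tolerance and the batches are hypothetical values chosen for illustration, and real monitoring would likely track several measures, including outcomes by group.

```python
def outcome_rate(decisions):
    """Share of positive (1) decisions in a non-empty batch of 0/1 values."""
    return sum(decisions) / len(decisions)


def check_period(baseline_rate, decisions, tolerance):
    """Compare one period's outcome rate with the baseline and flag drift."""
    rate = outcome_rate(decisions)
    drift = rate - baseline_rate
    return {"rate": rate, "drift": drift, "alert": abs(drift) > tolerance}


# Hypothetical weekly batches of decisions (1 = approved, 0 = refused).
baseline = 0.6
week_1 = [1, 1, 0, 1, 0, 1, 1, 0, 1, 0]
week_2 = [0, 0, 1, 0, 0, 1, 0, 0, 0, 1]

report_1 = check_period(baseline, week_1, tolerance=0.1)
report_2 = check_period(baseline, week_2, tolerance=0.1)
```

In this example the second week would raise an alert, prompting a review of whether the inputs, the population served or the model itself has changed.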

There is also direct human involvement in quality assurance, such as the need to consult legal services, provide human oversight of decisions with higher levels of impact (often referred to as having a "human-in-the-loop"), and ensure sufficient training for all employees developing, operating and using the system.

Finally, the Directive requires a peer review from a qualified third party. The goal of this review is to validate the algorithmic impact assessment, the quality of the system, the appropriateness of the quality assurance and risk mitigation measures, and to identify the residual risk of operating the system. The peer review report should be considered by officials before making the decision to operate the system. A collaboration between TBS, the Canada School of Public Service and the University of Ottawa resulted in a guide proposing best practices for this activity (Footnote 9).

Conclusion

The automation of service delivery by the government can have profound and far-reaching impacts, both positive and negative. The adoption of data-driven technologies presents a unique opportunity to review and address past biases and inequalities, to build a more inclusive and fair society. Data scientists have also seen that automated decision systems can present issues with bias and lack of explainability. The Treasury Board Directive on Automated Decision-Making provides a comprehensive set of requirements that can serve as the basic framework for the responsible automation of services and for preserving the basic protection of law in the digital world. In administrative law, the degree of procedural fairness for any given decision-making process increases or decreases with the significance of that decision. Likewise, the requirements of the Directive scale according to the impact level calculated by the algorithmic impact assessment.

Data scientists of the federal public service can play a leadership role in this transformation of government. By supporting the Directive in ensuring that decisions are efficient, accurate, consistent and interpretable, data scientists have the opportunity to identify ways to improve and optimize service and program delivery. Canadians also need data scientists to lead efforts to identify undesired biases in data, and support the responsible adoption of automation by building interpretable models, providing the transparency, fairness and explainability required.

Note: Stay tuned for an upcoming article on Statistics Canada’s Responsible Machine Learning Framework. The Data Science Network welcomes submissions for additional articles on this topic. Don’t hesitate to send us your article!

Team members

Benoit Deshaies, Dawn Hall
